Choosing the Right Project Management Approach

The project management methodology a team chooses strongly influences whether an IT project succeeds. While Agile offers flexibility and adaptability, Waterfall provides structure and predictability. The key to effective IT project execution is selecting the right approach based on project scope, team dynamics, and business objectives.

## Introduction

Choosing between Agile and Waterfall is a critical decision in IT project management. Agile promotes iteration and flexibility, while Waterfall emphasizes structured phases and documentation. Understanding the strengths and limitations of each helps organizations optimize project execution.

## 1. Agile: Flexibility & Rapid Adaptation

Agile methodologies (Scrum, Kanban) enable teams to iterate quickly, making adjustments as needed.

- Best for dynamic projects with changing requirements.

- Focus on collaboration and continuous feedback.

- Delivers incremental value rather than waiting until project completion.

## 2. Waterfall: Structured & Predictable Execution

Waterfall follows a linear approach where each phase is completed before moving to the next.

- Ideal for well-defined projects with fixed requirements.

- Clear documentation & compliance for regulated industries.

- Strong project control with defined scope and timelines.

## 3. When to Choose Agile vs. Waterfall

### Use Agile when:

- Requirements are evolving.

- Speed and flexibility are critical.

- Frequent stakeholder feedback is required.

### Use Waterfall when:

- Project scope is clear and stable.

- Compliance and documentation are key.

- The budget and timeline must be strictly controlled.
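To make the two checklists above concrete, here is a minimal Python sketch that counts how many criteria from each list a project meets. The `ProjectProfile` fields and the `suggest_approach` function are hypothetical names introduced for illustration, and equal weighting of the criteria is an assumption; a real decision would also weigh team dynamics and business context.

```python
from dataclasses import dataclass


@dataclass
class ProjectProfile:
    """Hypothetical yes/no answers to the criteria in the two lists above."""
    requirements_evolving: bool
    speed_critical: bool
    frequent_feedback_needed: bool
    scope_stable: bool
    compliance_heavy: bool
    fixed_budget_and_timeline: bool


def suggest_approach(p: ProjectProfile) -> str:
    """Count matching criteria per list and return the list with more matches."""
    agile = sum([p.requirements_evolving, p.speed_critical, p.frequent_feedback_needed])
    waterfall = sum([p.scope_stable, p.compliance_heavy, p.fixed_budget_and_timeline])
    if agile > waterfall:
        return "agile"
    if waterfall > agile:
        return "waterfall"
    # A tie means the checklist alone does not settle the question.
    return "mixed"


# Illustrative profile: a product with shifting requirements and no hard deadline.
profile = ProjectProfile(True, True, True, False, False, False)
print(suggest_approach(profile))
```

A "mixed" result is a prompt for discussion rather than an answer: the project shows traits from both lists.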

## 4. Hybrid Approaches: Best of Both Worlds

Many organizations adopt a Hybrid model, combining Agile’s adaptability with Waterfall’s structure.

- Agile for development phases.

- Waterfall for planning & compliance.

- Together, these aim to deliver both flexibility and predictability.

## Conclusion

No single approach fits all projects. IT leaders must align methodologies with project scope, risk tolerance, and business needs. A well-chosen framework maximizes efficiency, mitigates risks, and drives successful project delivery.

Cutting IT Costs Without Cutting Value

IT budgets are constantly under pressure, yet organizations cannot afford to compromise on performance and innovation. Cost-cutting in IT should focus on optimization, efficiency, and strategic investments rather than just reducing expenditures. This blog explores practical strategies to cut IT costs while maintaining high value, ensuring that businesses stay competitive without sacrificing critical services.

## Introduction

Cost management is a top priority for IT leaders, but reducing expenses without losing value is a challenge. Many businesses struggle with rising infrastructure costs, vendor contracts, and inefficient operations. The key to sustainable cost reduction lies in strategic decision-making and technology optimization.

## Benefits

- Efficiency Gains: Streamlining infrastructure and automating workflows reduces operational overhead.

- Better Vendor Management: Renegotiating contracts and leveraging bulk purchasing improves cost efficiency.

- Cloud and Hybrid Solutions: Moving workloads to the right environment saves costs without performance loss.

- Optimized Resource Utilization: Eliminating underused software and hardware prevents unnecessary spending.

## Challenges

- Resistance to Change: Stakeholders may hesitate to adopt new cost-saving measures.

- Hidden Costs in Cloud & Licensing: Mismanaged cloud expenses and complex licensing structures can offset savings.

- Balancing Innovation with Savings: Reducing costs shouldn’t limit technological advancements.

## Conclusion

Cutting IT costs without cutting value requires a strategic approach, combining efficiency, smart vendor management, and technology optimization. Stay tuned for deeper insights into cost-saving techniques in upcoming posts!

EmergEdge A New Frontier in IT Insights

The IT industry is evolving rapidly, with innovations in cloud computing, project management, cost optimization, and data center strategies reshaping the way organizations operate. EmergEdge was created to serve as a knowledge hub for IT professionals, offering practical insights, best practices, and expert perspectives. This blog will explore the latest trends, challenges, and solutions to help businesses stay ahead in an increasingly digital world.

## Introduction

In today’s fast-evolving technological landscape, IT professionals face constant challenges in keeping up with advancements, optimizing costs, and managing projects effectively. EmergEdge was created to address this gap: it is a platform dedicated to sharing insights on IT technologies, project management, IT cost optimization, and data center strategies. This blog aims to serve as a knowledge hub, offering practical guidance and expert perspectives on these critical areas of IT.

## Benefits

A platform like EmergEdge offers several advantages to IT professionals, organizations, and technology enthusiasts:

- Keeping Up with IT Innovations: From cloud computing to AI-driven automation, IT landscapes are rapidly changing. Having a curated source of insights ensures professionals stay ahead.

- Project Management Best Practices: Effective IT projects require structured approaches such as Agile, Waterfall, or DevOps practices. This blog will dive into real-world applications to enhance delivery success.

- IT Cost Optimization: Managing IT budgets is more than just cutting expenses—it’s about maximizing value. Expect strategies on vendor management, procurement efficiency, and cost-saving techniques.

- Data Centers & Infrastructure Excellence: As businesses migrate to hybrid and cloud-based models, understanding the future of data centers, security, and scalability is crucial.

## Challenges

While the benefits of emerging technologies are clear, organizations often struggle with:

- Technology Overload: New tools and platforms emerge daily, making it difficult to choose the right solutions.

- Budget Constraints: IT leaders must balance innovation with financial responsibility, ensuring investments align with business goals.

- Project Execution Hurdles: Poor planning, resource constraints, and changing requirements often lead to project delays or failures.

- Security & Compliance Risks: With rising cyber threats and regulatory requirements, organizations must stay vigilant in protecting their data and systems.

## Conclusion

EmergEdge is designed to bridge the gap between IT strategy and execution. Whether you’re an IT leader, project manager, or tech enthusiast, this blog will provide actionable insights, best practices, and expert advice to help navigate the complexities of modern IT.

Stay tuned for upcoming posts as we explore the latest trends, cost-saving techniques, and innovations shaping the future of IT. Welcome to EmergEdge—where IT knowledge meets transformation!

Future-Proofing Your Data Center Strategy

As IT infrastructure evolves, businesses must rethink their data center strategies to accommodate cloud, hybrid, and on-premise solutions. The future of data centers lies in scalability, security, automation, and cost-efficiency. With the rapid adoption of AI, edge computing, and hybrid cloud models, organizations must take a proactive approach to modernizing their infrastructure. This blog explores how companies can future-proof their data centers to ensure long-term success in an ever-changing technological landscape.

## Introduction

Data centers are the backbone of modern IT operations, housing the critical infrastructure that powers businesses worldwide. However, the traditional approach to data center management is becoming obsolete as enterprises move towards hybrid, multi-cloud, and edge computing models. The shift is driven by the need for greater agility, scalability, and efficiency while maintaining robust security and cost control.

Organizations that fail to evolve their data center strategies risk facing performance bottlenecks, security vulnerabilities, and rising operational costs. Future-proofing your data center involves strategic planning, modernization efforts, and adopting emerging technologies that can handle future IT demands.

## Benefits

A well-planned data center strategy ensures that organizations stay agile, competitive, and resilient in the face of technological disruptions. Below are some key benefits of future-proofing your data center:

### 1. Scalability & Flexibility

With the rapid growth of big data, AI workloads, and remote work, companies need data centers that scale effortlessly. Future-proofing your infrastructure ensures:

- Cloud & Hybrid Solutions: The ability to dynamically scale workloads between on-premise and cloud environments.

- Containerization & Microservices: Technologies like Kubernetes allow flexible, lightweight application deployments.

- Edge Computing Integration: Deploying resources closer to users to reduce latency and enhance real-time processing.

### 2. Cost Optimization & Energy Efficiency

Rising energy costs and environmental concerns push organizations to adopt efficient and sustainable data center models. Strategies include:

- Green Computing Initiatives: Using renewable energy sources and energy-efficient hardware.

- AI-Driven Resource Management: Automating cooling and power usage based on workload demand.

- Server Consolidation: Reducing excess hardware to minimize operational expenses.

### 3. Enhanced Security & Compliance

Cybersecurity threats continue to evolve, making it critical to fortify data centers against attacks. Future-proofing involves:

- Zero-Trust Architecture: Ensuring continuous authentication and verification for users and devices.

- AI-Powered Security: Using machine learning to detect anomalies and prevent breaches.

- Regulatory Compliance: Meeting the requirements of regulations and standards such as GDPR, HIPAA, and ISO 27001 to avoid fines and reputational damage.

### 4. Automation & AI-Driven Management

As IT workloads grow, manual management becomes unsustainable. Automating infrastructure can:

- Reduce Downtime: AI-driven predictive maintenance helps identify failures before they occur.

- Optimize Workloads: Automated load balancing improves resource allocation.

- Self-Healing Systems: AI-powered automation restarts and reconfigures services automatically in case of failures.

## Challenges

Despite the clear benefits, modernizing a data center comes with its own set of challenges:

### 1. High Migration Costs & Complexity

Moving workloads from legacy systems to cloud and hybrid models can be expensive. Organizations must:

- Conduct cost-benefit analyses before migrating.

- Implement a phased migration strategy to reduce downtime.

- Train IT teams on cloud-native architectures.

### 2. Security Risks in a Hybrid Environment

Hybrid and multi-cloud environments introduce new security risks, such as:

- Data leakage due to misconfigured cloud settings.

- Expanded attack surface across multiple environments.

- Compliance challenges in managing data across jurisdictions.

### 3. Ensuring Business Continuity During Transition

Shifting to a modernized data center can disrupt operations if not managed carefully. To mitigate risks:

- Implement disaster recovery (DR) and backup plans.

- Use redundant architectures to minimize service interruptions.

- Test infrastructure updates in sandbox environments before deployment.

## Conclusion

A future-proof data center is more than just an IT investment—it’s a strategic advantage. By integrating scalable infrastructure, security enhancements, automation, and cost-effective energy solutions, organizations can stay ahead of technological shifts.

To remain resilient and competitive, businesses must:

- Adopt hybrid and cloud solutions for agility.

- Leverage automation and AI to improve efficiency.

- Enhance security measures to protect critical assets.

- Optimize costs with energy-efficient strategies.

The future of IT infrastructure is here—will your data center be ready? Stay tuned for more insights on IT transformation and infrastructure evolution in upcoming blogs!

Beyond Budgets – How IT Cost Control Drives Strategic Decision-Making

IT cost control is often viewed as a budgeting exercise—but in reality, it’s a strategic lever for business transformation. When approached correctly, cost control becomes a tool for prioritizing investments, improving operational efficiency, and fueling innovation. In this post, we explore how IT leaders can use cost control to align IT spending with broader business goals.

## Introduction

Many IT departments face the challenge of "doing more with less." The pressure to reduce spending while delivering greater value is constant. However, cost control isn't about slashing budgets—it's about spending smarter. When managed strategically, IT cost control unlocks new opportunities, enhances visibility, and enables better decision-making at every level.

## 1. Visibility is the Foundation of Control

You can’t control what you can’t see. The first step to IT cost control is gaining end-to-end visibility into where money is going.

- Use ITFM (IT Financial Management) tools to track costs by service, application, or department.

- Tag and categorize cloud and infrastructure spending.

- Build dashboards that show real-time budget vs. actual spend.

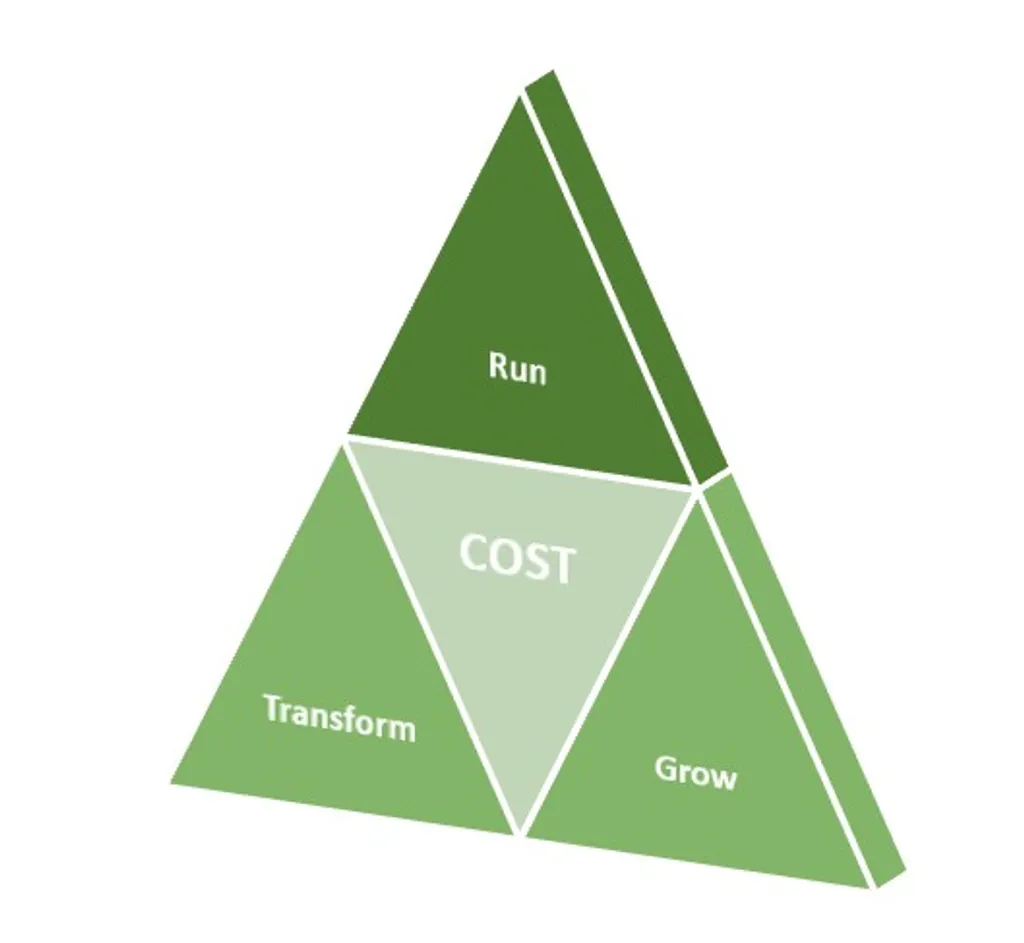

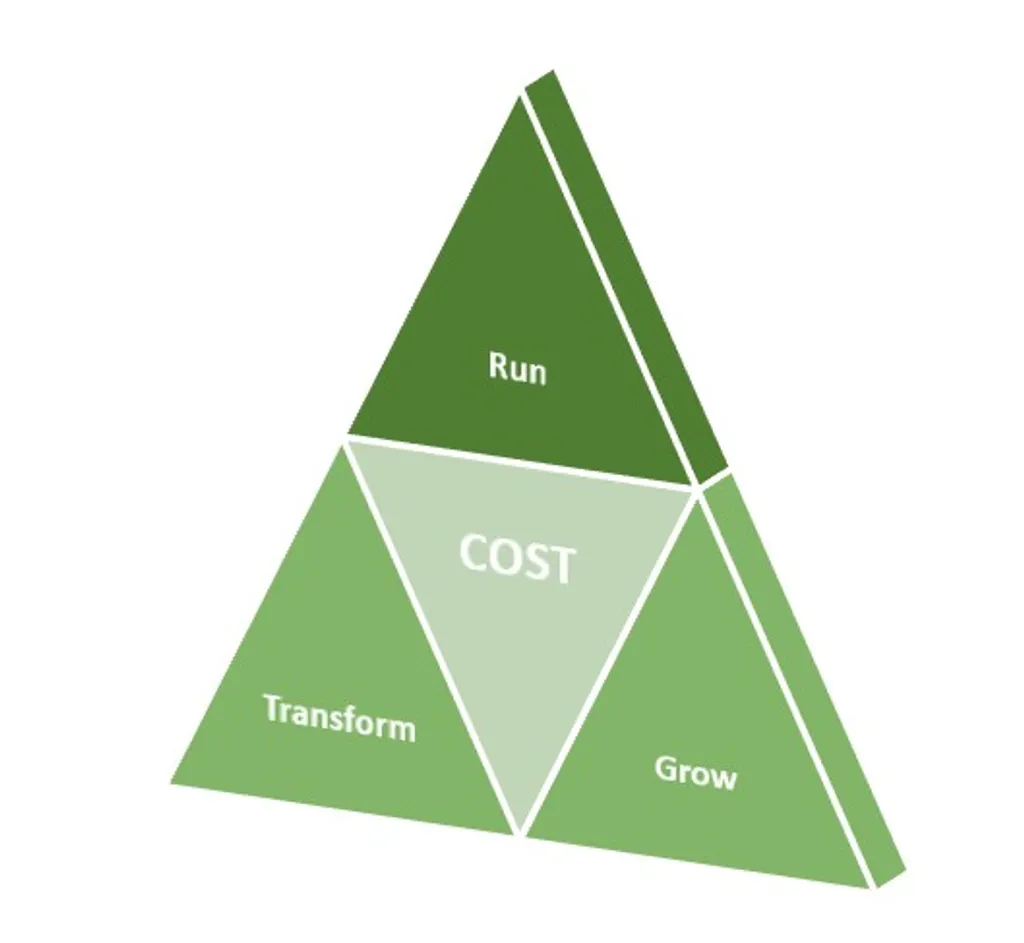
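The budget-versus-actual comparison behind such a dashboard can be sketched in a few lines of Python. The category names and figures below are made up for illustration, and `budget_variance` is a hypothetical helper, not part of any ITFM product.

```python
def budget_variance(budget: dict[str, float], actual: dict[str, float]) -> dict[str, float]:
    """Return actual minus budget per category; a positive value means overspend."""
    categories = sorted(set(budget) | set(actual))
    return {c: actual.get(c, 0.0) - budget.get(c, 0.0) for c in categories}


# Illustrative figures only, not real data.
budget = {"cloud": 120_000.0, "licenses": 45_000.0, "support": 30_000.0}
actual = {"cloud": 138_500.0, "licenses": 41_200.0, "support": 30_000.0}

for category, delta in budget_variance(budget, actual).items():
    status = "over" if delta > 0 else "on or under"
    print(f"{category}: {delta:+,.0f} ({status} budget)")
```

Categories missing from either side are treated as zero, so unbudgeted spend shows up as a positive variance instead of being silently dropped.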

## 2. Classify Spending Into Value Buckets

Not all IT costs are equal. Categorize spending to identify:

- Run costs (keeping the lights on)

- Grow costs (scaling services and improving user experience)

- Transform costs (investing in innovation and new capabilities)

This framework helps leaders align spending with business outcomes and redirect funds from low-value to high-value initiatives.
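As a rough illustration of the Run/Grow/Transform split, the sketch below totals line items per bucket and reports each bucket's share of overall spend. The line items, amounts, and the `bucket_shares` helper are hypothetical.

```python
from collections import defaultdict

BUCKETS = ("run", "grow", "transform")


def bucket_shares(line_items: list[tuple[str, str, float]]) -> dict[str, float]:
    """Sum cost per bucket and return each bucket's share of total spend."""
    totals: dict[str, float] = defaultdict(float)
    for _name, bucket, cost in line_items:
        if bucket not in BUCKETS:
            raise ValueError(f"unknown bucket: {bucket}")
        totals[bucket] += cost
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {b: 0.0 for b in BUCKETS}
    return {b: totals[b] / grand_total for b in BUCKETS}


# Illustrative line items: (name, bucket, annual cost).
items = [
    ("Data center lease", "run", 600.0),
    ("CRM capacity upgrade", "grow", 300.0),
    ("Analytics pilot", "transform", 100.0),
]
for bucket, share in bucket_shares(items).items():
    print(f"{bucket}: {share:.0%}")
```

Tracking these shares over time shows whether funds are actually moving from run costs toward grow and transform initiatives.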

## 3. Control Cloud & SaaS Sprawl

Cloud is one of the largest—and most variable—IT cost drivers.

- Right-size instances and remove unused resources.

- Review SaaS subscriptions—many are unused or duplicated.

- Implement FinOps practices to bring financial accountability to cloud teams.
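A first pass at right-sizing can be as simple as sorting instances by average utilization. The sketch below assumes utilization data has already been exported from a monitoring tool; the `Instance` type, the `review_instances` function, and the 5% and 30% CPU thresholds are illustrative assumptions, not recommendations from any cloud provider.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    name: str
    avg_cpu_percent: float  # average CPU utilization over the review window
    monthly_cost: float


def review_instances(instances, idle_below=5.0, oversized_below=30.0):
    """Label each instance 'remove', 'right-size', or 'keep' by average CPU."""
    findings = {}
    for inst in instances:
        if inst.avg_cpu_percent < idle_below:
            findings[inst.name] = "remove"
        elif inst.avg_cpu_percent < oversized_below:
            findings[inst.name] = "right-size"
        else:
            findings[inst.name] = "keep"
    return findings


# Illustrative fleet, not real data.
fleet = [
    Instance("batch-01", 2.0, 300.0),
    Instance("web-01", 18.0, 450.0),
    Instance("db-01", 64.0, 900.0),
]
findings = review_instances(fleet)
idle_spend = sum(i.monthly_cost for i in fleet if findings[i.name] == "remove")
print(findings, idle_spend)
```

CPU alone is a crude signal; memory, I/O, and business criticality should be checked before anything is actually removed.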

## 4. Vendor Strategy as a Cost Lever

Vendors can be strategic partners—or a drain on budgets.

- Consolidate vendors where possible.

- Renegotiate contracts with performance-based incentives.

- Regularly benchmark pricing and SLAs against competitors.

## 5. Cost Control Enables Innovation

Strategic cost control frees up budget to invest in new technologies:

- AI, automation, and analytics to improve decision-making.

- Edge computing and IoT in data-driven environments.

- Cybersecurity and compliance investments to reduce long-term risk.

## Conclusion

Cost control is more than just cost-cutting—it’s an essential discipline for aligning IT with the business. When cost transparency, value alignment, and strategic decision-making come together, IT leaders can drive transformation and innovation while maintaining financial discipline.

Smart IT cost control turns financial pressure into a competitive advantage.

Maximizing IT ROI – Smart Cost-Saving Strategies Beyond Budget Cuts

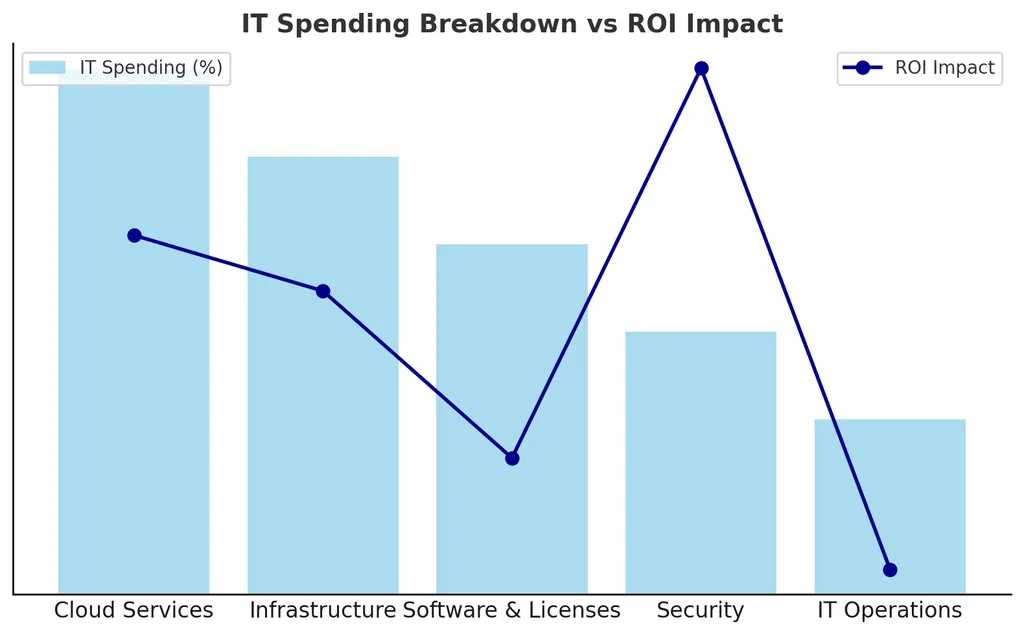

Cost-cutting alone doesn’t equate to long-term IT savings. Instead, businesses must focus on maximizing ROI by optimizing operations, vendor contracts, cloud workloads, and asset lifecycles. A data-driven approach to IT spending ensures efficiency without compromising service quality or innovation.

## Introduction

As IT budgets face increasing scrutiny, CIOs and IT leaders must rethink cost-saving strategies beyond traditional budget cuts. The challenge isn’t just to spend less but to spend smarter—ensuring every dollar invested in IT delivers maximum business value. This blog explores practical strategies to optimize IT investments without sacrificing performance.

## 1. Optimizing IT Operations Without Downgrading Services

Many organizations cut IT budgets reactively, leading to reduced service quality, security risks, and operational inefficiencies. Instead, businesses should:

- Automate routine tasks to reduce manual workload (AIOps, RPA).

- Consolidate IT tools & platforms to avoid redundancy.

- Invest in proactive monitoring to prevent costly outages and downtime.

## 2. Cloud Cost Management Through Smarter Workload Distribution

Cloud costs are among the biggest IT expenditures. Instead of simply reducing cloud services, organizations should optimize workload placement and resource allocation:

- Use cloud cost analysis tools and FinOps practices to identify waste.

- Use reserved instances for steady baseline load and auto-scaling to match real-time demand.

- Reassess multi-cloud vs. hybrid-cloud strategies based on cost efficiency.
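Whether a reservation pays off depends on how many hours the workload actually runs. The sketch below works through that arithmetic under a simplifying assumption: the reservation is billed for every hour of the term, while on-demand capacity is billed only for hours used. The hourly rates are made up and `break_even_utilization` is a hypothetical helper; real pricing models vary by provider.

```python
def break_even_utilization(on_demand_hourly: float, reserved_hourly: float) -> float:
    """Fraction of hours a workload must run for a reservation to cost less.

    Assumes the reservation is billed for every hour of the term, while
    on-demand is billed only for hours actually used.
    """
    if on_demand_hourly <= 0:
        raise ValueError("on_demand_hourly must be positive")
    return reserved_hourly / on_demand_hourly


# Illustrative rates in dollars per hour, not real prices.
threshold = break_even_utilization(on_demand_hourly=0.10, reserved_hourly=0.06)
print(f"Reserve only if the workload runs more than {threshold:.0%} of the time")
```

Workloads that run below the break-even fraction are usually better served by on-demand capacity with auto-scaling.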

## 3. Strategic Vendor Negotiations & Contract Optimization

Vendor contracts often include hidden costs and long-term lock-ins. IT leaders can negotiate better deals by:

- Benchmarking vendor pricing against industry standards.

- Seeking performance-based contracts (pay-for-value models).

- Consolidating vendor agreements for better pricing power.

## 4. Enhancing IT Asset Utilization & Lifecycle Management

IT hardware and software often get replaced prematurely, leading to unnecessary expenses. A structured asset lifecycle strategy can reduce costs:

- Extend hardware life with predictive maintenance.

- Leverage refurbished enterprise-grade equipment where feasible.

- Implement IT asset tracking to avoid underutilized resources.

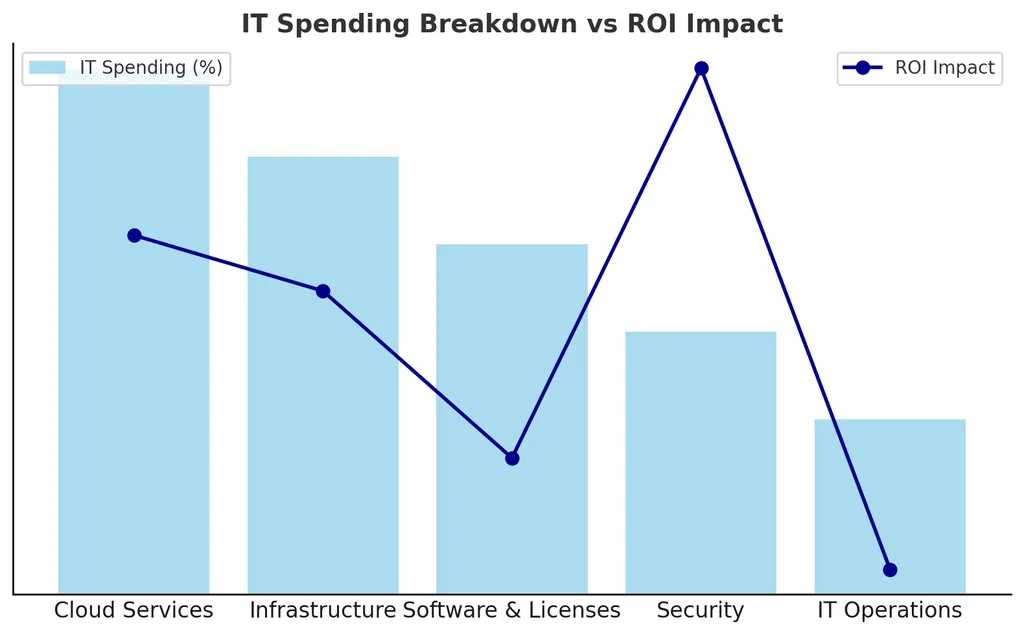

## 5. Data-Driven Decision-Making for IT Budget Efficiency

Cost-saving decisions should be based on data, not assumptions. Organizations should:

- Use analytics to track IT spending trends & ROI.

- Identify underperforming investments and reallocate funds.

- Develop a cost-optimization framework tied to business goals.
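One simple way to spot underperforming investments is to compute a basic return on investment, (benefit − cost) / cost, for each and list those below a chosen threshold. The investment names, figures, and the `underperformers` helper below are hypothetical, and estimating the benefit figure is the hard part in practice.

```python
def roi(benefit: float, cost: float) -> float:
    """Simple return on investment: net gain divided by cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


def underperformers(investments: dict[str, tuple[float, float]],
                    threshold: float = 0.0) -> list[str]:
    """Names of investments whose ROI is below the threshold, worst first."""
    scored = {name: roi(benefit, cost) for name, (benefit, cost) in investments.items()}
    return sorted((n for n, r in scored.items() if r < threshold), key=scored.get)


# Illustrative (benefit, cost) pairs, not real data.
portfolio = {
    "service desk automation": (150.0, 100.0),
    "legacy reporting tool": (80.0, 100.0),
    "unused analytics licenses": (50.0, 100.0),
}
print(underperformers(portfolio))
```

The resulting list is a starting point for the reallocation conversation, not a verdict: some low-ROI spend is mandatory.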

## Conclusion

The future of IT cost optimization is about balancing efficiency with innovation. By focusing on smart investments, strategic vendor management, and data-driven insights, IT leaders can ensure their budgets are optimized without compromising growth, security, or service quality.

The Rise of Edge Computing – How It’s Transforming Data Centers

Traditional data centers are under pressure as businesses demand faster processing, lower latency, and real-time analytics. Edge computing is reshaping the landscape by decentralizing processing, reducing bandwidth costs, and enhancing system responsiveness. Understanding its impact is crucial for IT decision-makers.

## Introduction

As AI, IoT, and real-time applications become mainstream, traditional cloud data centers face scalability challenges. Edge computing offers a decentralized alternative, processing data closer to the source to reduce latency and bandwidth dependency.

## 1. What is Edge Computing?

- ✅ Decentralized processing near data sources (IoT devices, sensors, etc.).

- ✅ Reduces latency for real-time analytics and automation.

- ✅ Minimizes bandwidth costs by reducing cloud data transfers.
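To illustrate the bandwidth point, here is a minimal sketch of one common edge pattern: summarizing a window of raw sensor readings locally and sending only the summary upstream. The `summarize_window` function and the sample readings are hypothetical; actual edge platforms provide their own aggregation mechanisms.

```python
from statistics import mean


def summarize_window(readings: list[float]) -> dict[str, float]:
    """Collapse a window of raw sensor readings into one small summary record."""
    if not readings:
        raise ValueError("readings must not be empty")
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
    }


# Illustrative window of temperature readings collected at the edge node.
window = [21.0, 21.5, 22.0, 21.5]
summary = summarize_window(window)
print(f"Upload 1 summary record instead of {len(window)} raw readings: {summary}")
```

The trade-off is lost detail: if the raw readings are needed later, they have to be retained at the edge or sampled.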

## 2. Why Edge Computing is Critical for Modern IT Infrastructure

- 📌 Faster Decision-Making – Critical for industries like healthcare, manufacturing, and smart cities.

- 📌 Lower Bandwidth Costs – Reduces dependency on cloud-based data transfers.

- 📌 Enhanced Security – Processes sensitive data locally rather than in the cloud.

## 3. How Edge Computing Transforms Data Centers

- ✅ Hybrid Data Center Models – Combining centralized cloud with edge nodes.

- ✅ AI & IoT Integration – Processing massive real-time data streams.

- ✅ Scalability & Reliability – Expanding capacity without latency issues.

## Conclusion

Edge computing isn’t replacing traditional data centers—it’s augmenting them. Future-ready IT infrastructures must incorporate edge architectures to ensure agility, performance, and cost efficiency.

The Role of IT Project Management in Business Success

Effective IT project management is essential for delivering successful digital transformations, infrastructure upgrades, and software development. Without proper methodologies, projects risk budget overruns, delays, and misalignment with business objectives. This blog highlights the importance of IT project management, its benefits, and the challenges organizations face in execution.

## Introduction

As businesses rely more on technology, IT projects have become central to growth and operational efficiency. However, many projects fail due to poor planning, lack of resources, or weak execution strategies. Implementing strong project management frameworks ensures on-time, on-budget, and high-quality project delivery.

## Benefits

- Improved Execution & Delivery: Clear timelines, roles, and milestones reduce project risks.

- Better Resource Allocation: Ensures teams work efficiently and within budget.

- Alignment with Business Goals: Ensures projects contribute to overall company success.

- Risk Mitigation: Identifying potential failures early prevents costly rework.

## Challenges

- Scope Creep: Uncontrolled expansion of project requirements.

- Lack of Communication: Misalignment between stakeholders leads to project delays.

- Budget Constraints: Ensuring financial efficiency without compromising project quality.

## Conclusion

Strong IT project management ensures strategic execution, better efficiency, and high-value delivery. By adopting the right methodologies, businesses can maximize success. Stay tuned for practical insights on IT project methodologies in upcoming posts!

Why Microsoft Abandoned Its Successful Underwater Data Center Project

In a surprising move, Microsoft has decided to retire Project Natick—its ambitious, futuristic underwater data center initiative. Despite its promising performance and environmental benefits, the project will not see a commercial rollout. This post explores the history of Project Natick, its outcomes, and why Microsoft ultimately chose to move on.

## Introduction

In 2015, Microsoft launched one of the most daring infrastructure experiments in data center history: Project Natick, an underwater data center initiative designed to test whether data centers submerged in the ocean could be more sustainable, efficient, and reliable than their land-based counterparts. Eight years later, the project has officially been shelved—despite proving successful on many fronts.

So what went right—and why did Microsoft walk away?

## The History of Project Natick

Project Natick began as a response to multiple challenges:

- The rising demand for low-latency data delivery.

- Growing concerns around energy usage and sustainability in data centers.

- The desire to deploy data centers closer to coastal population hubs.

### Phase 1 (2015):

Microsoft submerged a prototype off the coast of California. This capsule operated for 105 days and proved the feasibility of submersion without disruption.

### Phase 2 (2018-2020):

A larger vessel was placed 117 feet deep off the coast of the Orkney Islands, Scotland. This version contained 864 servers and 27.6 petabytes of storage and was fully powered by renewable energy from wind and tidal sources.

The capsule operated for over two years without issues, outperforming traditional data centers in terms of reliability and sustainability.

## Outcomes of the Project

Project Natick was not a failed experiment—in fact, it was a remarkable success.

### ✅ Higher Reliability

Microsoft reported that the underwater data center had one-eighth the failure rate of its land-based counterparts. The reduced exposure to human error, corrosion, and temperature fluctuation contributed to this reliability.

### ✅ Environmental Efficiency

- Powered entirely by renewable energy.

- Naturally cooled by seawater, eliminating the need for traditional HVAC systems.

- Reduced carbon footprint and energy costs.

### ✅ Modular & Rapid Deployment

- Data centers could be manufactured, shipped, and deployed within 90 days.

- Ideal for regions with limited space or infrastructure.

### ✅ Proximity to Coastal Populations

- Almost 50% of the global population lives near the coast.

- Underwater data centers could reduce latency and improve connectivity.

## So Why Did Microsoft Abandon It?

Despite its many advantages, Microsoft quietly announced it would not pursue commercial-scale underwater data centers.

Here’s why:

### ❌ Scalability Limitations

- While modular, the pods had a fixed capacity and weren’t easily upgradable or serviceable.

- Scaling would require deploying many units in marine environments, adding logistical and environmental complexity.

### ❌ Maintenance Challenges

- Physical repairs meant bringing the entire unit back to the surface.

- Long-term maintenance and lifecycle planning were not as flexible as with land-based facilities.

### ❌ Regulatory and Environmental Hurdles

- Deploying in coastal waters requires governmental and environmental permissions.

- Potential ecological concerns and jurisdictional red tape presented barriers to global rollout.

### ❌ Cloud Strategy Shift

- Microsoft is doubling down on AI, hybrid cloud, and edge computing, which favor more dynamic, accessible infrastructure.

- Underwater pods, while innovative, don’t align well with the rapid scaling needs of AI model training and inference.

## Conclusion

Project Natick may be over, but it left a lasting impact. It proved that sustainable, resilient, and low-maintenance data centers are possible—even underwater. It offered insight into how modular design, renewable power, and remote operations can shape future infrastructure.

As Microsoft pivots toward AI and global edge computing, Natick will be remembered as an inspiring leap toward sustainable cloud infrastructure. Sometimes, even the most successful pilots don’t make it to production—but their lessons carry forward.

Why Project Governance Is the Missing Link in Most IT Failures

While Agile frameworks such as Scrum dominate the IT project management conversation, project governance is often the silent factor determining success or failure. Project governance isn’t about bureaucracy; it’s about structure, accountability, and strategic alignment. This post dives into how project governance can prevent common IT failures and build a foundation for scalable success.

## Introduction

Many IT projects fail not because of technical issues or a lack of methodology, but because of poor governance. Budgets spiral, timelines drift, and deliverables shift. These symptoms often stem from a lack of clear roles, decision-making frameworks, and strategic oversight. Strong governance is the backbone of successful IT delivery.

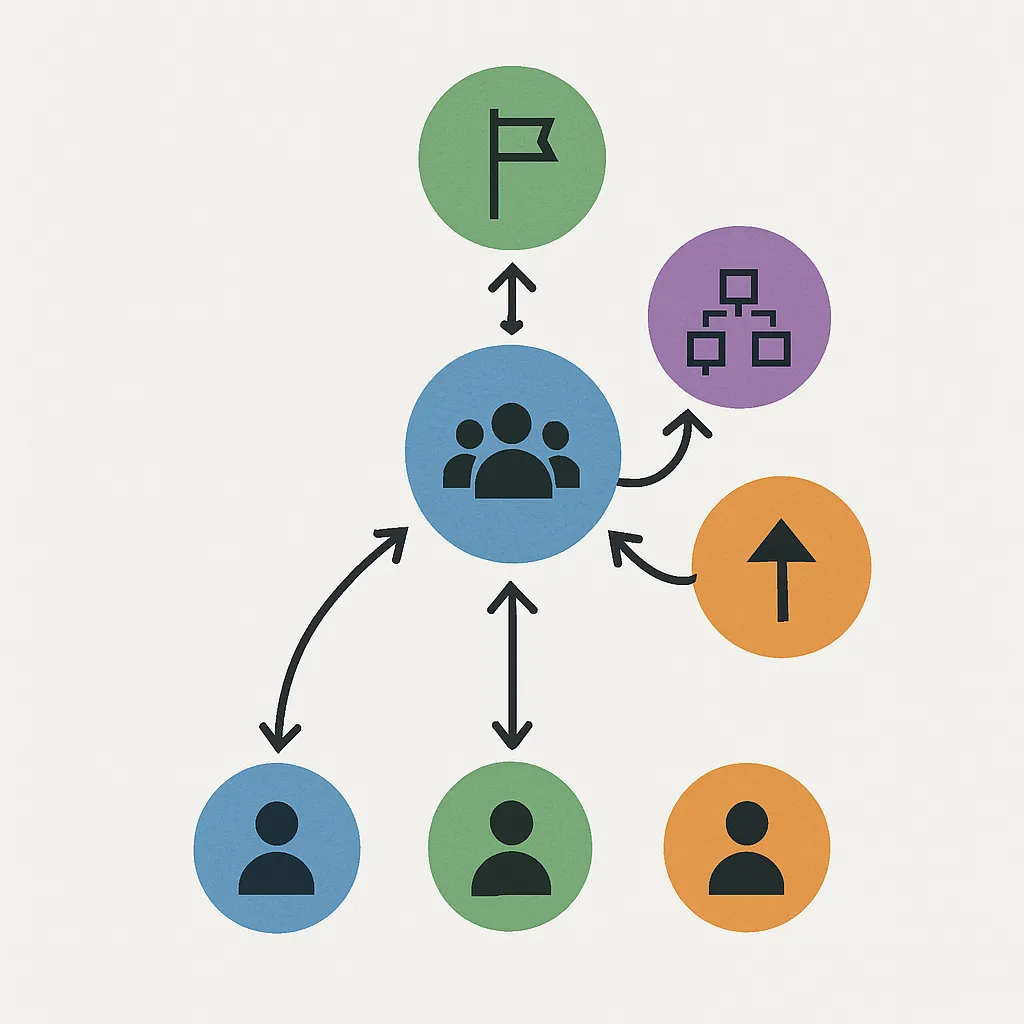

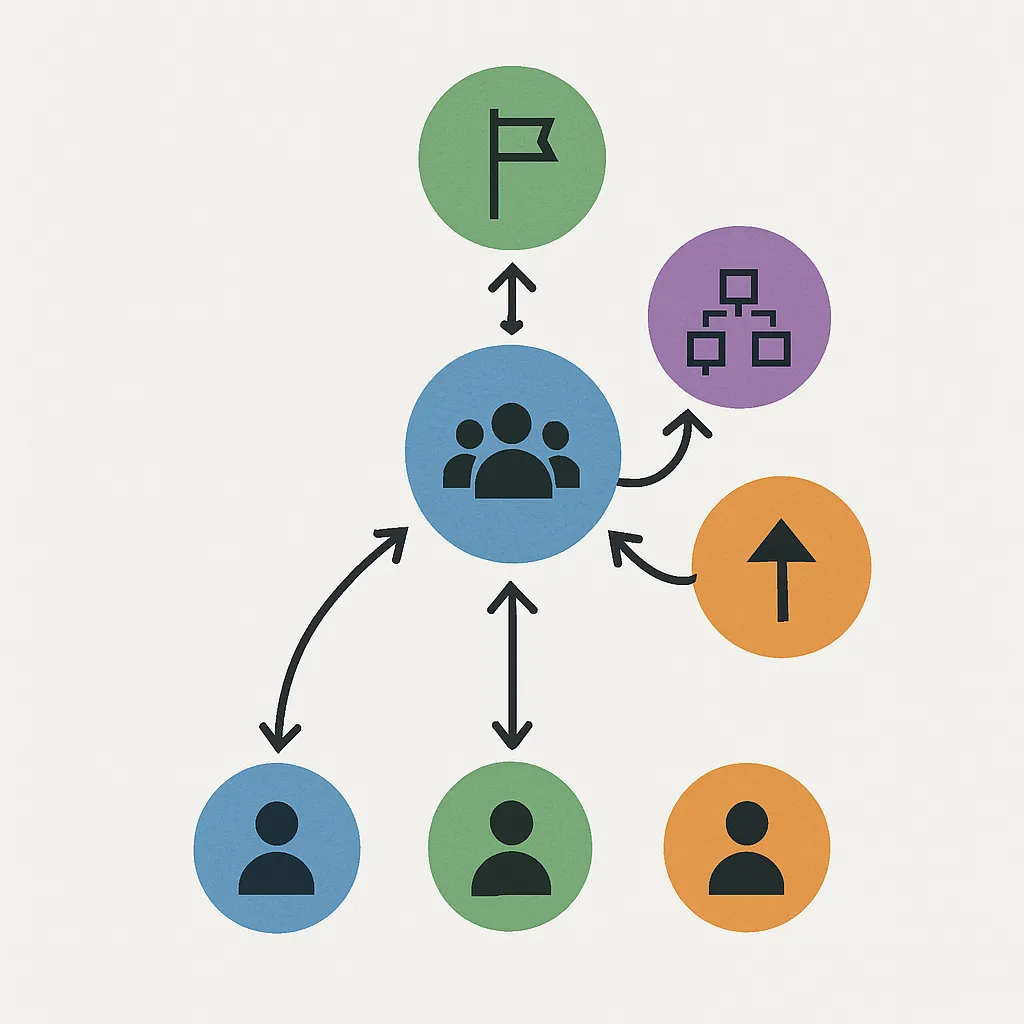

## 1. What Is Project Governance?

Project governance is the framework that guides decision-making, accountability, and control throughout a project lifecycle. It includes:

- Defined roles and responsibilities (sponsor, steering committee, PMO)

- Escalation paths for issues and risks

- Change control processes and performance metrics

## 2. Why Governance Often Gets Overlooked

- Focus shifts to delivery speed over structure.

- Teams assume Agile frameworks alone are enough.

- Governance is seen as administrative overhead.

In reality, a lightweight governance model supports agility while protecting projects from chaos.

## 3. Key Benefits of Strong Project Governance

- Clear Accountability – Everyone knows who makes decisions and owns outcomes.

- Strategic Alignment – Projects stay aligned with business goals and priorities.

- Early Risk Detection – Escalation paths and governance reviews surface issues sooner.

- Stakeholder Confidence – Transparent reporting builds trust with leadership.

## 4. Practical Governance Models

- Steering Committees – Provide strategic oversight and unblock major decisions.

- RAID Logs – Track Risks, Assumptions, Issues, and Dependencies.

- Stage Gates – Milestone reviews for scope, budget, and progress validation.

- PMO Involvement – Enable consistent practices and coaching across projects.
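Of the models above, the RAID log is the easiest to picture as a data structure. The sketch below is one possible minimal shape, with hypothetical class and field names; teams typically keep this in a spreadsheet or project tool rather than in code.

```python
from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    RISK = "risk"
    ASSUMPTION = "assumption"
    ISSUE = "issue"
    DEPENDENCY = "dependency"


@dataclass
class Entry:
    kind: Kind
    summary: str
    owner: str
    is_open: bool = True


@dataclass
class RaidLog:
    entries: list[Entry] = field(default_factory=list)

    def add(self, kind: Kind, summary: str, owner: str) -> Entry:
        """Record a new open entry and return it."""
        entry = Entry(kind, summary, owner)
        self.entries.append(entry)
        return entry

    def open_items(self, kind: Kind) -> list[Entry]:
        """Open entries of one kind, e.g. for a steering committee review."""
        return [e for e in self.entries if e.kind is kind and e.is_open]


# Illustrative entries only.
log = RaidLog()
risk = log.add(Kind.RISK, "Vendor API may be deprecated mid-project", "PM")
log.add(Kind.DEPENDENCY, "Network team must open firewall ports", "Infra lead")
risk.is_open = False  # mitigated
print(len(log.open_items(Kind.RISK)), len(log.open_items(Kind.DEPENDENCY)))
```

Giving every entry a named owner is the point of the exercise: it ties the log back to the accountability that governance is meant to provide.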

## 5. Balancing Governance with Agility

Governance doesn’t mean Waterfall. Modern governance can be adaptive:

- Set guardrails, not gates.

- Use weekly health checks instead of rigid templates.

- Empower teams with decision rights within defined boundaries.

## Conclusion

If Agile is the engine, project governance is the steering wheel. Without it, IT projects veer off course. Strong governance ensures strategic alignment, stakeholder trust, and scalable delivery—without slowing innovation. It’s time governance got the attention it deserves in every IT project playbook.
